Problem: Given two processes i and j, write a program that guarantees mutual exclusion between them without any additional hardware support.

Wasting CPU clock cycles

In layman's terms, when a thread was waiting for its turn, it sat in a long while loop that tested the condition millions of times per second, doing unnecessary computation. There is a better way to wait, known as 'yield'.

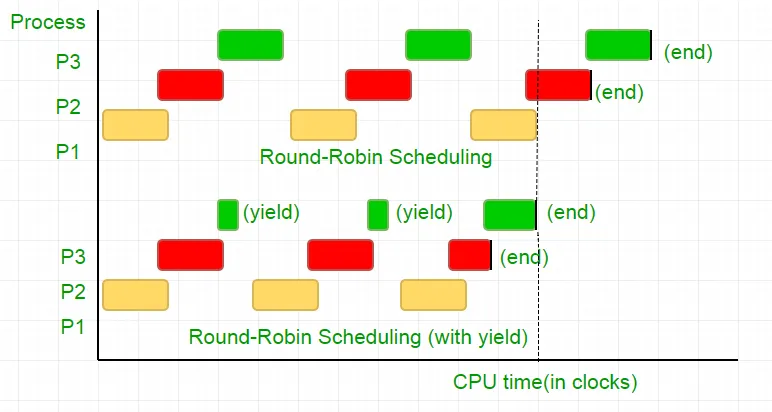

To understand what it does, we need to dig into how the process scheduler works in Linux. The idea described here is a simplified version of the scheduler; the actual implementation has many complications.

Consider the following example.

There are three processes: P1, P2 and P3. P3 contains a while loop like the one in our code, which does no useful computation and exits only when P2 finishes its execution. The scheduler puts all three in a round-robin queue. Say the processor clock speed is 1000000 cycles per second and the scheduler allocates 100 clock cycles to each process in each iteration. First P1 runs for 100 cycles (0.0001 seconds), then P2 (0.0001 seconds), then P3 (0.0001 seconds). Since there are no other processes, this cycle repeats until P2 ends, after which P3 leaves its loop, executes and eventually terminates.

Every time slice that P3 spends spinning is a complete waste of 100 CPU clock cycles. To avoid this, the waiting process voluntarily gives up its CPU time slice, i.e. it yields, which ends the time slice so that the scheduler picks the next process to run. Now we test our condition once and then give up the CPU. Assuming the test takes 25 clock cycles, we save 75% of the computation in each time slice.

With a processor clock speed of 1 MHz, that is a lot of saving!
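The arithmetic behind these figures can be checked with a small sketch. The function names `slice_microseconds` and `saved_percent` are made up for this illustration; only the numbers (1 MHz clock, 100 cycles per slice, 25 cycles per test) come from the example above.

```c
/* Length of one time slice in microseconds for a given clock rate. */
long slice_microseconds(long cycles_per_slice, long clock_hz)
{
    return cycles_per_slice * 1000000L / clock_hz;
}

/* Percentage of a time slice handed back to the scheduler when the
 * waiting process tests its condition once and then yields. */
double saved_percent(long cycles_per_slice, long cycles_per_test)
{
    return 100.0 * (double)(cycles_per_slice - cycles_per_test)
                 / (double)cycles_per_slice;
}
```

With the values from the example, `slice_microseconds(100, 1000000)` gives 100 (that is, 0.0001 seconds) and `saved_percent(100, 25)` gives 75.0.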

Different platforms provide different functions for this. Linux provides sched_yield().

    void lock(int self)
    {
        flag[self] = 1;
        turn = 1-self;
        while (flag[1-self] == 1 && turn == 1-self)
            // Only change is the addition of
            // sched_yield() call
            sched_yield();
    }

Memory fence

The code in the earlier tutorial might have worked on most systems, but it was not 100% correct. The logic was sound, but most modern CPUs employ performance optimizations that can result in out-of-order execution. This reordering of memory operations (loads and stores) normally goes unnoticed within a single thread of execution, but it can cause unpredictable behaviour in concurrent programs.

Consider this example:

    while (f == 0);
    // Memory fence required here
    print x;

In the example above, the compiler treats the two statements as independent of each other and may reorder them to make the code more efficient, which can cause problems in concurrent programs. To avoid this, we place a memory fence, which tells the compiler (and the processor) that memory operations must not be moved across the barrier.
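A minimal sketch of this pattern in C, assuming GCC or Clang (for `__sync_synchronize()`) and POSIX threads. The variables `f` and `x` are the ones from the example; `writer` and `run_fence_demo` are hypothetical names introduced only for illustration.

```c
#include <pthread.h>
#include <sched.h>

static volatile int f = 0;  /* "ready" flag from the example */
static int x = 0;           /* data published by the writer */

/* Writer: publish x first, then raise the flag. */
static void *writer(void *arg)
{
    (void)arg;
    x = 42;
    __sync_synchronize();   /* x must become visible before f */
    f = 1;
    return NULL;
}

/* Starts the writer, waits for f, and returns the value of x it sees. */
int run_fence_demo(void)
{
    pthread_t t;
    int seen;

    f = 0;
    x = 0;
    if (pthread_create(&t, NULL, writer, NULL) != 0)
        return -1;

    while (f == 0)
        sched_yield();      /* wait without burning the whole time slice */
    __sync_synchronize();   /* do not read x before f was seen as 1 */
    seen = x;

    pthread_join(t, NULL);
    return seen;
}
```

The fence on the reader side sits exactly where the comment in the example asks for it: between the wait loop and the use of x. The fence on the writer side is the matching half, so that x is written before f.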

So the order of the statements

    flag[self] = 1;
    turn = 1-self;
    while (turn condition check)
        yield();

must stay exactly as written for the lock to work. Otherwise the lock can fail, for example by letting both threads into the critical section or by ending up in a deadlock.

To ensure this, compilers provide an instruction that prevents statements from being reordered across the barrier. In the case of GCC, it is __sync_synchronize().

The modified lock therefore issues the fence after the two stores and before the wait loop.

Complete implementation in C:
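A sketch of the lock with the fence in place, assuming GCC or Clang and POSIX threads. The fence between the stores and the wait loop is the one described above. The other fences (between the two stores, after the loop, and in `unlock`) are cautious additions for weakly ordered CPUs and are not taken from the text. The helpers `worker`, `run_counter`, `counter` and `iterations` are hypothetical names used only to exercise the lock.

```c
#include <pthread.h>
#include <sched.h>

static volatile int flag[2];
static volatile int turn;
static long counter;        /* shared data protected by the lock */
static long iterations;     /* increments performed by each thread */

void lock_init(void)
{
    flag[0] = 0;
    flag[1] = 0;
    turn = 0;
}

void lock(int self)
{
    flag[self] = 1;
    __sync_synchronize();   /* addition: keep the two stores in order */
    turn = 1 - self;
    __sync_synchronize();   /* fence from the text: stores before the loads */
    while (flag[1 - self] == 1 && turn == 1 - self)
        sched_yield();
    __sync_synchronize();   /* addition: critical section starts after the wait */
}

void unlock(int self)
{
    __sync_synchronize();   /* addition: finish the critical section first */
    flag[self] = 0;
}

static void *worker(void *arg)
{
    int self = *(int *)arg;

    lock(self);
    for (long i = 0; i < iterations; i++)
        counter++;          /* critical section */
    unlock(self);
    return NULL;
}

/* Runs two threads that each add n to the counter; returns the total. */
long run_counter(long n)
{
    pthread_t t[2];
    int id[2] = {0, 1};

    counter = 0;
    iterations = n;
    lock_init();
    pthread_create(&t[0], NULL, worker, &id[0]);
    pthread_create(&t[1], NULL, worker, &id[1]);
    pthread_join(t[0], NULL);
    pthread_join(t[1], NULL);
    return counter;
}
```

If the lock holds, `run_counter(n)` returns exactly 2 * n, which matches the "Actual Count" versus "Expected Count" check in the output shown at the end of the article.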

    // Filename: peterson_yieldlock_memoryfence.cpp
    // Use below command to compile:
    // g++ -pthread peterson_yieldlock_memoryfence.cpp -o peterson_yieldlock_memoryfence
    #include

    // Filename: peterson_yieldlock_memoryfence.c
    // Use below command to compile:
    // gcc -pthread peterson_yieldlock_memoryfence.c -o peterson_yieldlock_memoryfence
    #include

    import java.util.concurrent.atomic.AtomicInteger;

    public class PetersonYieldLockMemoryFence {
        static AtomicInteger[] flag = new AtomicInteger[2];
        static AtomicInteger turn = new AtomicInteger();
        static final int MAX = 1000000000;
        static int ans = 0;

        static void lockInit() {
            flag[0] = new AtomicInteger();
            flag[1] = new AtomicInteger();
            flag[0].set(0);
            flag[1].set(0);
            turn.set(0);
        }

        static void lock(int self) {
            flag[self].set(1);
            turn.set(1 - self);
            // Memory fence to prevent the reordering of instructions beyond this barrier.
            // In Java, AtomicInteger get() and set() have volatile semantics and
            // provide this guarantee implicitly.
            // No direct equivalent to atomic_thread_fence is needed.
            while (flag[1 - self].get() == 1 && turn.get() == 1 - self)
                Thread.yield();
        }

        static void unlock(int self) {
            flag[self].set(0);
        }

        static void func(int s) {
            int i = 0;
            int self = s;
            System.out.println("Thread Entered: " + self);
            lock(self);
            // Critical section (Only one thread can enter here at a time)
            for (i = 0; i < MAX; i++)
                ans++;
            unlock(self);
        }

        public static void main(String[] args) {
            // Initialize the lock
            lockInit();

            // Create two threads (both run func)
            Thread t1 = new Thread(() -> func(0));
            Thread t2 = new Thread(() -> func(1));

            // Start the threads
            t1.start();
            t2.start();

            try {
                // Wait for the threads to end.
                t1.join();
                t2.join();
            } catch (InterruptedException e) {
                e.printStackTrace();
            }

            System.out.println("Actual Count: " + ans + " | Expected Count: " + MAX * 2);
        }
    }

    import threading

    flag = [0, 0]
    turn = 0
    MAX = 10**9
    ans = 0


    def lock_init():
        # Initializes the lock by resetting the flags and turn.
        global flag, turn
        flag = [0, 0]
        turn = 0


    def lock(self):
        # Executed before entering the critical section. Sets the flag for
        # the current thread and gives the turn to the other thread.
        global flag, turn
        flag[self] = 1
        turn = 1 - self
        while flag[1 - self] == 1 and turn == 1 - self:
            pass  # busy-wait until the other thread leaves


    def unlock(self):
        # Executed after leaving the critical section. Resets the flag for
        # the current thread.
        global flag
        flag[self] = 0


    def func(s):
        # Executed by each thread. Locks the critical section, increments
        # the shared variable and then unlocks the critical section.
        global ans
        self = s
        print(f'Thread Entered: {self}')
        lock(self)
        for _ in range(MAX):
            ans += 1
        unlock(self)


    def main():
        # Creates and starts the two threads, then waits for them.
        lock_init()
        t1 = threading.Thread(target=func, args=(0,))
        t2 = threading.Thread(target=func, args=(1,))
        t1.start()
        t2.start()
        t1.join()
        t2.join()
        print(f'Actual Count: {ans} | Expected Count: {MAX*2}')


    if __name__ == '__main__':
        main()

    class PetersonYieldLockMemoryFence {
        static flag = [0, 0];
        static turn = 0;
        static MAX = 1000000000;
        static ans = 0;

        // Function to acquire the lock
        static async lock(self) {
            PetersonYieldLockMemoryFence.flag[self] = 1;
            PetersonYieldLockMemoryFence.turn = 1 - self;
            // Asynchronous loop with a small delay to yield
            while (PetersonYieldLockMemoryFence.flag[1 - self] == 1 &&
                   PetersonYieldLockMemoryFence.turn == 1 - self) {
                await new Promise(resolve => setTimeout(resolve, 0));
            }
        }

        // Function to release the lock
        static unlock(self) {
            PetersonYieldLockMemoryFence.flag[self] = 0;
        }

        // Function representing the critical section
        static func(s) {
            let i = 0;
            let self = s;
            console.log('Thread Entered: ' + self);
            // Lock the critical section
            PetersonYieldLockMemoryFence.lock(self).then(() => {
                // Critical section (Only one thread can enter here at a time)
                for (i = 0; i < PetersonYieldLockMemoryFence.MAX; i++) {
                    PetersonYieldLockMemoryFence.ans++;
                }
                // Release the lock
                PetersonYieldLockMemoryFence.unlock(self);
            });
        }

        // Main function
        static main() {
            // Create two threads (both run func)
            const t1 = new Thread(() => PetersonYieldLockMemoryFence.func(0));
            const t2 = new Thread(() => PetersonYieldLockMemoryFence.func(1));

            // Start the threads
            t1.start();
            t2.start();

            // Wait for the threads to end.
            setTimeout(() => {
                console.log('Actual Count: ' + PetersonYieldLockMemoryFence.ans +
                            ' | Expected Count: ' + PetersonYieldLockMemoryFence.MAX * 2);
            }, 1000); // Delay for a while to ensure threads finish
        }
    }

    // Define a simple Thread class for simulation
    class Thread {
        constructor(func) {
            this.func = func;
        }

        start() {
            this.func();
        }
    }

    // Run the main function
    PetersonYieldLockMemoryFence.main();

    // mythread.h (A wrapper header file with assert statements)
    #ifndef __MYTHREADS_h__
    #define __MYTHREADS_h__
    #include

    // mythread.h (A wrapper header file with assert
    // statements)
    #ifndef __MYTHREADS_h__
    #define __MYTHREADS_h__
    #include

    import threading
    import ctypes


    # Function to lock a thread lock
    def Thread_lock(lock):
        lock.acquire()  # Acquire the lock
        # No need for assert in Python; acquire will raise an exception if it fails


    # Function to unlock a thread lock
    def Thread_unlock(lock):
        lock.release()  # Release the lock
        # No need for assert in Python; release will raise an exception if it fails


    # Function to create a thread
    def Thread_create(target, args=()):
        thread = threading.Thread(target=target, args=args)
        thread.start()  # Start the thread
        # No need for assert in Python; thread.start() will raise an exception if it fails


    # Function to join a thread
    def Thread_join(thread):
        thread.join()  # Wait for the thread to finish
        # No need for assert in Python; thread.join() will raise an exception if it fails

Output:

Thread Entered: 1

Thread Entered: 0

Actual Count: 2000000000 | Expected Count: 2000000000